Introduction: A Real Case, Not a Hypothetical

In recent years, academic institutions have increasingly engaged in large-scale internet measurement research. One such case is a publicly documented web and TLS scanning project conducted under the name of the University of Georgia (UGA). The project is explained on a scan-notification page intended to justify the unsolicited connections it makes to servers across the internet.

The researchers describe their activity as educational, non-intrusive, and conducted in the interest of science. Yet a fundamental ethical question remains unresolved:

Can research be ethical if it is conducted without prior consent, even when labeled “educational”?

This article argues that it cannot.

Who Is Involved and What Is Being Done

According to the public scan notice, the project is affiliated with UGA’s School of Computing and lists specific individuals responsible for the activity, including Roberto Perdisci and graduate researcher Xingda Bao.

The activity involves automated scanning of publicly reachable servers across the internet, collecting information such as TLS certificates, HTTP headers, and landing-page content. The stated goal is to analyze the security ecosystem and identify trends related to certificates and potential misuse.

The researchers emphasize that:

- Only publicly accessible data is collected
- No exploitation is intended
- An opt-out mechanism is available

None of these claims resolves the core ethical issue: participation is imposed, not agreed to.

The Central Ethical Issue: No Prior Consent

The defining problem is not the technology, nor even the data itself. It is the absence of permission.

Website owners are included automatically, without notice, consent, or agreement. Public accessibility is treated as implicit approval for automated research.

That assumption is ethically unsound.

Public exposure does not imply consent. Silence does not imply consent. Being reachable does not imply permission to be studied, catalogued, or analyzed at scale.

Why “Opt-Out” Is Not Consent

The scan notice provides an opt-out option: if you do not want to be scanned, you may contact the researchers and request exclusion.

This is not consent. It is retroactive damage control.

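Ethical consent must be prior, informed, and voluntary. Opt-out fails on all three fronts. It is not prior, because the scan has already occurred. It is not informed, because the target typically does not know the project exists. It is not voluntary, because inclusion is the default and the burden shifts entirely to the unaware target to discover the practice and object.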

This is not a choice — it is a procedural afterthought.

“Educational Purpose” Does Not Make an Act Ethical

The primary defense offered is education.

But explanation does not equal authorization.

If stating “this is for education” were sufficient, then logically:

- A chemistry lecturer could manufacture illegal drugs “for teaching”
- A horticulture instructor could grow marijuana “for educational research”
- A researcher could bypass safeguards simply by publishing a justification page afterward

In every one of these cases, society already understands the boundary:

Intent does not override consent, law, or ethics.

Education demands higher moral standards, not exceptions.

There Are Ethical Alternatives — and They Are Easy

If the purpose is genuinely educational or methodological, there is no necessity to involve uninformed, non-consenting parties worldwide.

Ethical alternatives are readily available:

- Dummy websites can be created in minutes
- Synthetic environments can be generated using cloud platforms
- AI agents can produce realistic but non-real datasets
- Universities already operate their own domains and infrastructure

Researchers can:

- Scrape intentionally created test sites
- Use school-owned systems
- Work with explicitly consenting participants
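To make the first option concrete, here is a minimal sketch, using only the Python standard library, of a dummy site running on the local machine and a "scan" that collects its status, headers, and landing page. The handler name, the `X-Test-Site` header, and the page content are all invented for illustration. This is not the method of any real project, only a demonstration that a collection pipeline can be exercised without touching anyone else's server.

```python
import http.server
import threading
import urllib.request


class DummySiteHandler(http.server.BaseHTTPRequestHandler):
    """Serves one fixed landing page; stands in for a purpose-built test site."""

    def do_GET(self):
        body = b"<html><body><h1>Test site for scan research</h1></body></html>"
        self.send_response(200)
        self.send_header("Content-Type", "text/html; charset=utf-8")
        self.send_header("Content-Length", str(len(body)))
        # Hypothetical marker header, invented for this example.
        self.send_header("X-Test-Site", "consented")
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        # Silence the default per-request logging to keep the example quiet.
        pass


def scan_dummy_site():
    """Start a local dummy site, collect its headers and page, then stop it."""
    # Port 0 asks the operating system for any free port on the loopback interface.
    server = http.server.HTTPServer(("127.0.0.1", 0), DummySiteHandler)
    port = server.server_address[1]
    thread = threading.Thread(target=server.serve_forever, daemon=True)
    thread.start()
    try:
        # An empty ProxyHandler makes sure the request goes straight to localhost.
        opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
        with opener.open(f"http://127.0.0.1:{port}/", timeout=5) as resp:
            return {
                "status": resp.status,
                "headers": dict(resp.headers.items()),
                "body": resp.read().decode("utf-8"),
            }
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    result = scan_dummy_site()
    print(result["status"], result["headers"].get("X-Test-Site"))
```

Everything the scan touches here is owned by the person running it, so the question of consent never arises.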

When non-consensual global scanning is chosen despite these options, the issue is no longer technical feasibility. It is choice.

Why Global, Non-Consensual Scraping Is Indefensible

This practice fails a basic moral test:

- The subjects did not agree
- The subjects receive no benefit
- The subjects bear the operational and security risk
- The researchers bear none of the cost

That is not collaboration. It is extraction.

Taking data without permission, when permission could reasonably be obtained or the collection avoided altogether, is morally indistinguishable from stealing, regardless of motive.

If Cambridge Analytica Was Wrong, This Should Concern You Too

Readers should ask themselves a simple question:

If the Cambridge Analytica scandal was unethical, why is this different?

In that case, data was collected and repurposed without meaningful user consent. The public reaction was clear: lack of consent mattered more than claimed intent.

The same was true in the Facebook data misuse scandals. Users were told data was “public” or “available,” yet society overwhelmingly rejected the idea that availability equals permission.

The pattern is the same:

- Data collected without consent
- Justified after the fact
- Responsibility shifted to the affected party

If we condemn those practices in industry, we cannot excuse them in academia simply because the word “research” is used.

Ethical standards must be consistent — or they are meaningless.

Institutional Trust and Misuse of Resources

Universities benefit from extraordinary public trust. Their names, IP ranges, and infrastructure signal legitimacy and ethical oversight.

When non-consensual scanning is conducted under an academic banner, the institution implicitly endorses — or at least tolerates — the method.

Universities are not neutral platforms. They exist to model integrity and responsibility.

Normalizing “take first, explain later” undercuts that mission.

Lessons From Medical Research Ethics

History shows where this logic leads.

Medical research once justified unethical actions “for science.” Society responded by establishing consent requirements, oversight bodies, and ethical review frameworks.

The lesson was simple and hard-earned:

Good intentions are not enough.

Researchers cannot be the sole judges of what is acceptable.

COVID-19 and Why Oversight Still Matters

There is no conclusive evidence that COVID-19 was deliberately engineered or intentionally released. The World Health Organization has stated that the origins of COVID-19 remain under investigation.

But intent is not the point.

Even accidental harm demands accountability, safeguards, and oversight. The absence of malice does not remove ethical responsibility.

When Research Methods Resemble Threat Activity

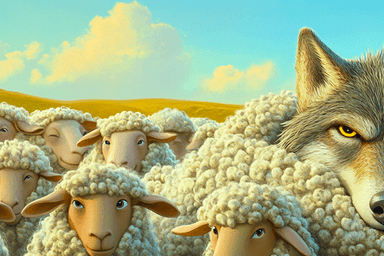

From the target’s perspective, automated probing without consent looks identical to hostile reconnaissance.

Ethics are judged by actions, not titles.

If research methods resemble those of threat actors, trust collapses — regardless of the justification offered afterward.

What Ethical Internet Research Should Look Like

Ethical research is harder and more limited — and that is its strength.

It requires:

- Consent-first approaches
- Advance transparency
- Respect for refusal by default
- Acceptance that not all data should be collected

Ethical limits are not obstacles. They are safeguards.
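As a rough illustration of what "consent-first" and "refusal by default" could mean in practice, the sketch below filters a target list against an allowlist of networks whose owners have agreed in advance. The function names are hypothetical, and the addresses come from the ranges reserved for documentation. The design choice that matters is the default: anything not explicitly listed is never contacted.

```python
import ipaddress


def build_allowlist(cidrs):
    """Parse CIDR strings for networks whose owners consented in advance."""
    return [ipaddress.ip_network(cidr) for cidr in cidrs]


def is_in_scope(target, allowlist):
    """Return True only if the target address falls inside a consented network."""
    addr = ipaddress.ip_address(target)
    return any(addr in network for network in allowlist)


def filter_targets(targets, allowlist):
    """Keep consented targets; everything else is refused by default."""
    return [target for target in targets if is_in_scope(target, allowlist)]


if __name__ == "__main__":
    # Documentation-only address ranges, used here as placeholder data.
    consented = build_allowlist(["192.0.2.0/24"])
    candidates = ["192.0.2.10", "198.51.100.7", "203.0.113.5"]
    print(filter_targets(candidates, consented))
```

This inverts the opt-out model: instead of scanning everything except those who objected, the scanner reaches only those who said yes.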

Conclusion: Ethics Before Convenience

Education does not justify taking without asking.

Consent obtained after the fact is not consent. Transparency without permission is not ethics — it is public relations.

If society condemns non-consensual data collection by corporations, it must hold academia to the same standard.

If research cannot be conducted ethically, then it should not be conducted at all.

"Why We Wrote This" We discovered this scanning activity firsthand — it was detected targeting a user's website hosted on an s͛Card custom domain. Because s͛Card custom domains route through our infrastructure, we're able to monitor and flag suspicious activity like unauthorized academic scraping on behalf of our users. This added layer of protection is built into every s͛Card custom domain, giving users visibility and defense against threats they might never notice on their own.